Preparing for the AI-Driven CVE Tsunami: Why Transparent Prioritization Matters

A tale of two forces — and why neither cancels the other out.

This week, Anthropic launched Claude Code Security, an AI tool that autonomously scans entire codebases for vulnerabilities, suggests human-reviewed fixes, and reportedly uncovered 500+ high-severity vulnerabilities in production open-source projects during internal testing, some of which had gone unnoticed for years.

The reaction was immediate. CrowdStrike and Cloudflare dropped 8%. A total of $15 billion was erased from cybersecurity stocks in a single session on February 20th. Markets interpreted the launch as a disruption signal: if AI can replace vulnerability scanners, what happens to the industry?

But that reading misses both the real story and the real risk.

⚡ Effect #1 - The Accelerant: AI Is Flooding the World With Code (and CVEs)

The first effect of AI on cybersecurity is well-documented, but its consequences are still underestimated.

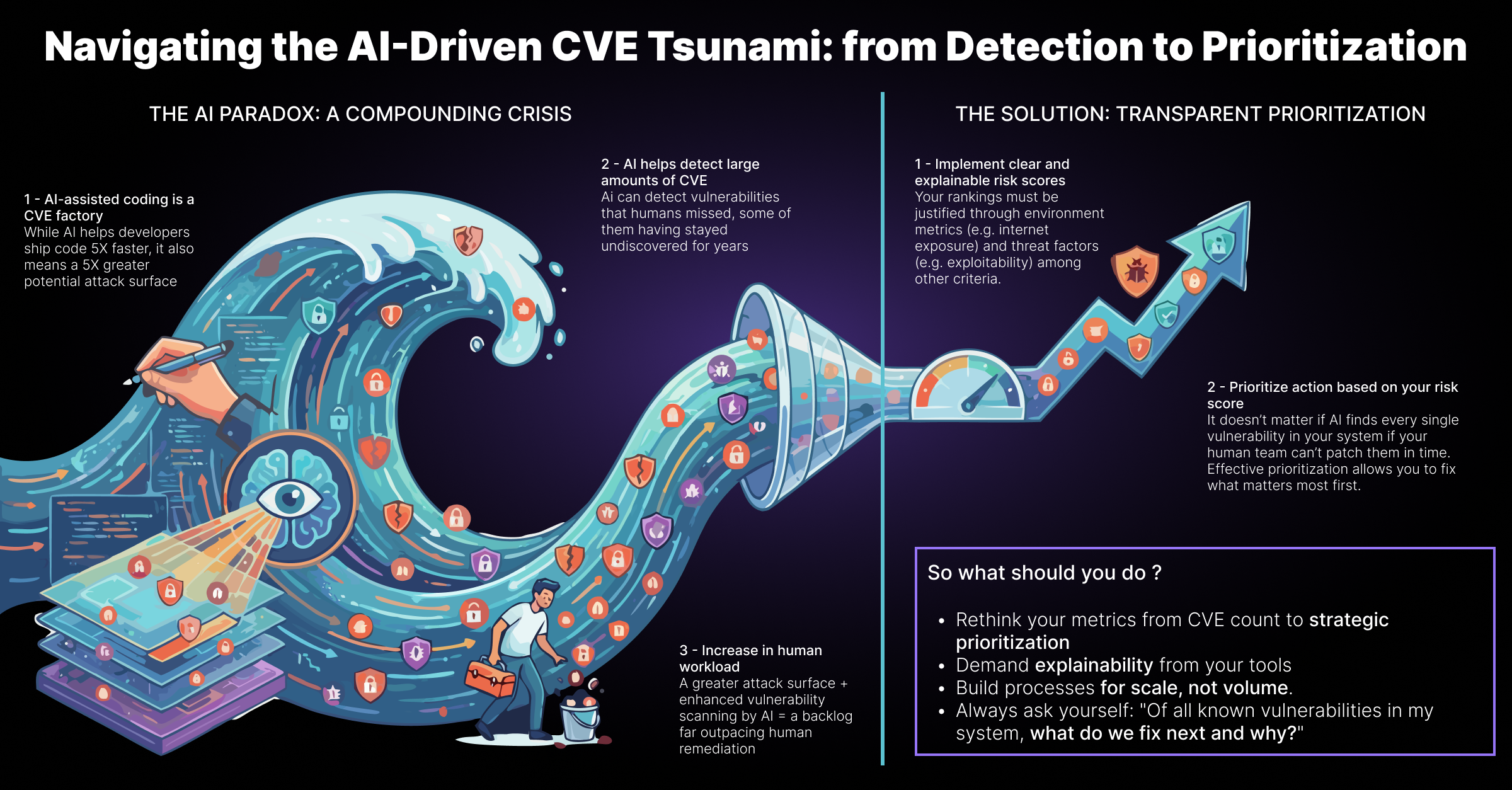

AI-assisted coding is a CVE factory.

When developers ship code 5x faster using Copilot, Claude, or Cursor, they don't ship 5x fewer bugs. They ship 5x more software, and every line of it is potential attack surface. The NVD has already seen record CVE volumes in recent years. Generative AI is likely to steepen that curve further.

There's more. AI doesn't just create vulnerabilities at scale: it exploits them at scale. Threat actors equipped with AI can now probe environments, craft payloads, and move laterally with machine-speed precision. The asymmetry between attacker and defender has never been steeper.

This is the CVE tsunami: not a gradual tide, but a wave generated upstream, by the very tools that are accelerating software development.

🛡️ Effect #2 - The Defender: AI Detects What Humans Missed

And then comes Claude Code Security.

Unlike traditional SAST tools that rely on predefined rules, Claude Code Security uses large language models to understand data flows and component interactions, thus catching business logic flaws and broken access controls that rule-based scanners routinely miss. It runs self-verification passes before surfacing findings. It presents severity and confidence scores. And crucially, it keeps humans in the loop: no auto-push to prod.

This is genuinely powerful. For security teams drowning in backlog, an AI that can intelligently triage a 3-million-line codebase is a force multiplier. It lowers the detection floor. It democratizes access to senior-level code review.

A legitimate equalizer? Potentially yes.

⚖️ The Paradox: Those Two Forces Don't Cancel Out - They Compound

Here's where the conventional narrative breaks down.

A naive reading suggests: AI creates more CVEs, AI finds more CVEs → balance restored.

The reality is far more unsettling. Both effects accelerate simultaneously. You don't get equilibrium; you get a faster cycle.

More code shipped → more CVEs generated

More AI scanning → more CVEs discovered

More AI on the offensive → more CVEs actively exploited

The result? Security teams now face an environment where the volume of known vulnerabilities outpaces any human team's ability to remediate. The backlog isn't shrinking. It's growing faster, and under more pressure.
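To make the compounding effect concrete, here is a toy model, not a forecast. It assumes a fixed weekly remediation capacity and an inflow of new findings that grows by a constant percentage each week. The function name `simulate_backlog` and all of its parameters are hypothetical, chosen only for illustration.

```python
def simulate_backlog(weeks, created_per_week, growth, fixed_per_week, start=0):
    """Toy model: return the open backlog at the end of each week.

    created_per_week: new findings in week 1 (hypothetical input)
    growth:           weekly growth rate of new findings, e.g. 0.1 for 10%
    fixed_per_week:   constant remediation capacity of the team
    """
    backlog = start
    inflow = created_per_week
    history = []
    for _ in range(weeks):
        # The backlog can never drop below zero.
        backlog = max(0, backlog + inflow - fixed_per_week)
        history.append(round(backlog))
        # Findings arrive faster every week; capacity stays flat.
        inflow *= 1 + growth
    return history


if __name__ == "__main__":
    # A team that starts perfectly balanced still falls behind.
    print(simulate_backlog(weeks=3, created_per_week=100,
                           growth=0.1, fixed_per_week=100))
```

Even when a team starts out fixing exactly as many findings as arrive, any sustained growth in inflow opens a gap that widens every week.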

Discovery is no longer the bottleneck. Prioritization is.

🎯 Why Transparent Prioritization Is the Critical Differentiator

When every tool in your stack can surface hundreds of new findings per scan, the question shifts from "what vulnerabilities do we have?" to "which ones actually matter and how do we justify acting on them first?"

This is where transparency becomes non-negotiable.

A prioritization score is only valuable if it's explainable:

- Why is this CVE ranked critical in my environment specifically?

- Is it because it's actively exploited in the wild? Because it sits on an internet-exposed asset? Because it's linked to a critical business process?

- How do I communicate this to the CISO, to the board, to the audit team?
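As a minimal sketch of what "explainable" could look like in practice, the example below returns a score together with the reasons that produced it. The factor names, the weights, and the placeholder CVE identifier are all assumptions made for illustration. They do not come from any standard or from any specific product.

```python
from dataclasses import dataclass, field

# Hypothetical weights, chosen only for illustration.
WEIGHTS = {
    "actively_exploited": 0.5,
    "internet_exposed": 0.3,
    "business_critical": 0.2,
}


@dataclass
class Finding:
    cve_id: str
    base_severity: float  # e.g. a CVSS base score between 0 and 10
    actively_exploited: bool = False
    internet_exposed: bool = False
    business_critical: bool = False


@dataclass
class Explanation:
    cve_id: str
    score: float
    reasons: list[str] = field(default_factory=list)


def prioritize(finding: Finding) -> Explanation:
    """Return a contextual score plus the reasons behind it."""
    multiplier = 1.0
    reasons = [f"base severity {finding.base_severity:.1f}"]
    for factor, weight in WEIGHTS.items():
        if getattr(finding, factor):
            # Each environmental factor raises the score and is recorded.
            multiplier += weight
            reasons.append(f"{factor.replace('_', ' ')} (+{weight:.0%})")
    score = round(finding.base_severity * multiplier, 2)
    return Explanation(finding.cve_id, score, reasons)


if __name__ == "__main__":
    # "CVE-0000-0001" is a placeholder, not a real CVE.
    result = prioritize(Finding("CVE-0000-0001", 7.0,
                                actively_exploited=True,
                                internet_exposed=True))
    print(result.score, result.reasons)
```

The point of the design is that the score never travels alone: the list of reasons is what a security team can hand to the CISO, the board, or an auditor.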

In an AI-driven world where both threats and findings multiply at machine speed, opaque prioritization is a liability. "Fix these 20 CVEs" with no rationale creates organizational paralysis and erodes trust between security teams and the business.

Transparent, risk-based prioritization of vulnerabilities does the opposite. It creates a shared language between SecOps, IT, and leadership. It turns an overwhelming backlog into an actionable queue. It makes security decisions defensible not just to auditors, but to the organization itself.

🔭 What This Means in Practice

The launch of Claude Code Security is a milestone, not an endpoint. It signals that AI-powered detection is becoming commoditized. What remains scarce - and differentiating - is the ability to make sense of what AI surfaces.

For security leaders, this means:

1. Rethink your metrics. CVE count is noise. Risk reduction velocity is signal.

2. Demand explainability from your tools. If your prioritization engine can't tell you why a vulnerability is ranked the way it is, it's a black box you can't operationalize or defend.

3. Build processes for scale, not volume. The teams that win won't be those who find the most vulnerabilities, but those who fix the right ones, demonstrably faster.
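"Risk reduction velocity" has no single agreed definition. One possible reading, sketched below as an assumption, is the amount of risk score remediated per day over a reporting window. The function name and the shape of the input (pairs of fix date and risk score) are hypothetical.

```python
from datetime import date


def risk_reduction_velocity(remediations, start, end):
    """Risk points remediated per day within [start, end], inclusive.

    remediations: iterable of (fixed_on, risk_score) pairs (hypothetical shape)
    """
    days = (end - start).days + 1
    if days <= 0:
        raise ValueError("end must not precede start")
    # Only count fixes that landed inside the reporting window.
    removed = sum(score for fixed_on, score in remediations
                  if start <= fixed_on <= end)
    return removed / days


if __name__ == "__main__":
    fixes = [
        (date(2025, 1, 1), 9.0),
        (date(2025, 1, 5), 6.0),
        (date(2025, 2, 1), 10.0),  # outside the window, ignored
    ]
    print(risk_reduction_velocity(fixes, date(2025, 1, 1), date(2025, 1, 10)))
```

Unlike a raw CVE count, a metric of this kind rewards fixing a few high-risk findings over closing many trivial ones.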

The AI-driven CVE tsunami isn't coming. It's already here. The organizations that navigate it successfully won't be the ones with the best scanner. They'll be the ones with the clearest answer to the question: "Of everything we know about, what do we fix next and why?"

AI is rewriting the rules of vulnerability management. Explainability in risk scoring is no longer a "nice to have", and sound prioritization - transparent, risk-based, and actionable - is the new competitive moat for security teams.

💬 What's your take? Is the industry ready to move from detection to intelligent prioritization at scale? Drop your thoughts below.